Hinge

Making AI Decidable

The Unknown

When I joined Hinge, leadership had already committed to exploring AI as a strategic direction.

What wasn’t clear was whether AI could exist responsibly in something as emotionally sensitive as dating.

The organization was stuck in abstract debate:

• Is AI manipulative in dating?

• Does it create inauthentic self-presentation?

• Does it replace human judgment or support it?

• Where does user agency actually live?

These conversations stalled progress because there was no shared way to evaluate AI behavior.

Everyone was arguing from values, intuition, or fear, not experience.

The real blocker wasn’t disagreement.

It was the absence of a decision-making substrate.

The Mission

I was not responsible for shipping an AI feature.

My role was to create the medium through which the organization could decide whether AI belonged in the product at all, and if so, under what constraints.

That meant:

Making AI behavior legible enough to evaluate

Helping leadership understand what AI was good at vs. not good at in this space

Separating philosophical concerns from designable ones

Turning abstract fear into concrete, testable questions

In practice, I acted as a translator between values, systems, and experience, designing the scaffolding that allowed real decisions to happen.

The Launch

Shifted the Question from “Should We Do AI?” to “How Should AI Behave?”

Instead of starting with features, I reframed the work around behavioral boundaries.

I helped the team move from “Is AI good or bad for dating?” to “What roles could AI play, and which roles break trust?”

This reframing allowed us to explore AI as a spectrum of behaviors, not a binary choice.

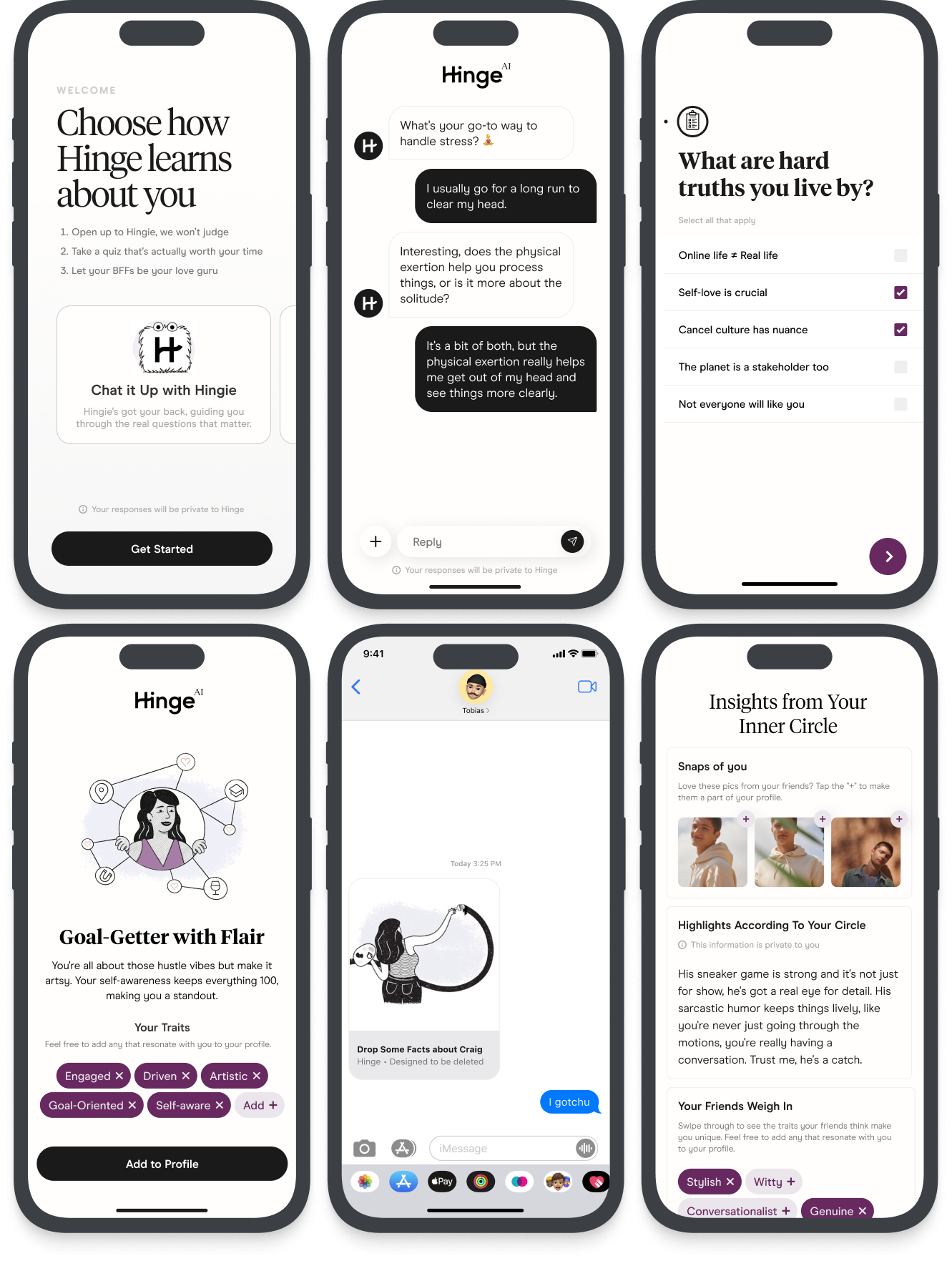

Designed a Set of Testable AI Interaction Concepts

To make AI discussable, I created a set of conceptual interaction models, each representing a different posture AI could take in dating.

These concepts were intentionally not production designs.

They were behavioral probes.

Each concept explored a different axis:

AI as interpreter vs. generator

AI as advisor vs. narrator

AI acting on behalf of users vs. alongside them

AI amplifying individuality vs. optimizing appeal

Visuals in the case study:

Full-width concept billboards showing each AI posture

Minimal UI, heavy emphasis on language, tone, and system intent

Clear labeling of what the AI does and does not decide

Ran Concept Testing Focused on Trust, Agency, and Emotional Safety

We tested these concepts not to validate desirability, but to surface failure modes.

Instead of asking “Would you use this?”, we asked:

• Where does this feel uncomfortable?

• When does it cross a line?

• What feels supportive vs. invasive?

• When does AI feel like it’s speaking for you?

Participants reacted strongly to differences in timing, tone, and autonomy, even when functionality was similar.

This confirmed that AI behavior, not capability, was the core risk surface.

Synthesized Findings into a Shared Decision Framework

Rather than collapsing insights into recommendations, I formalized them into a shared evaluation system the org could reuse.

This resulted in the AI Feature Development Playbook.

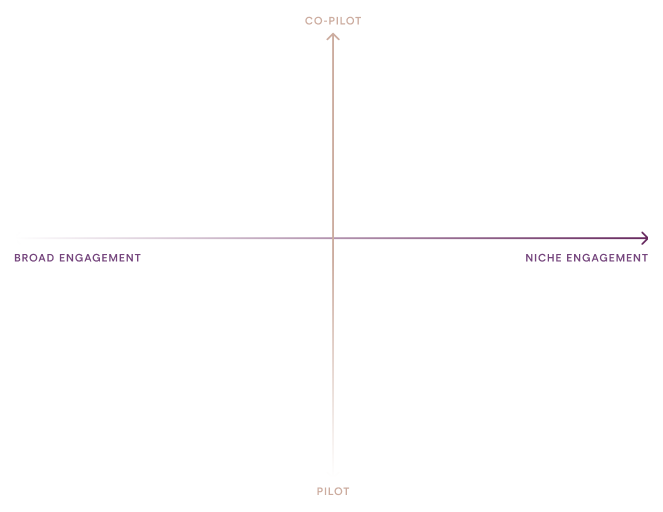

A Strategic Spectrum

I mapped AI concepts across two critical dimensions:

Autonomy (AI as co-pilot ↔ pilot)

Breadth of Engagement (broad appeal ↔ niche expression)

This made three things immediately clear: different AI roles carry different risks, “AI” is not one thing, and misalignment happens when teams mix quadrants unintentionally.

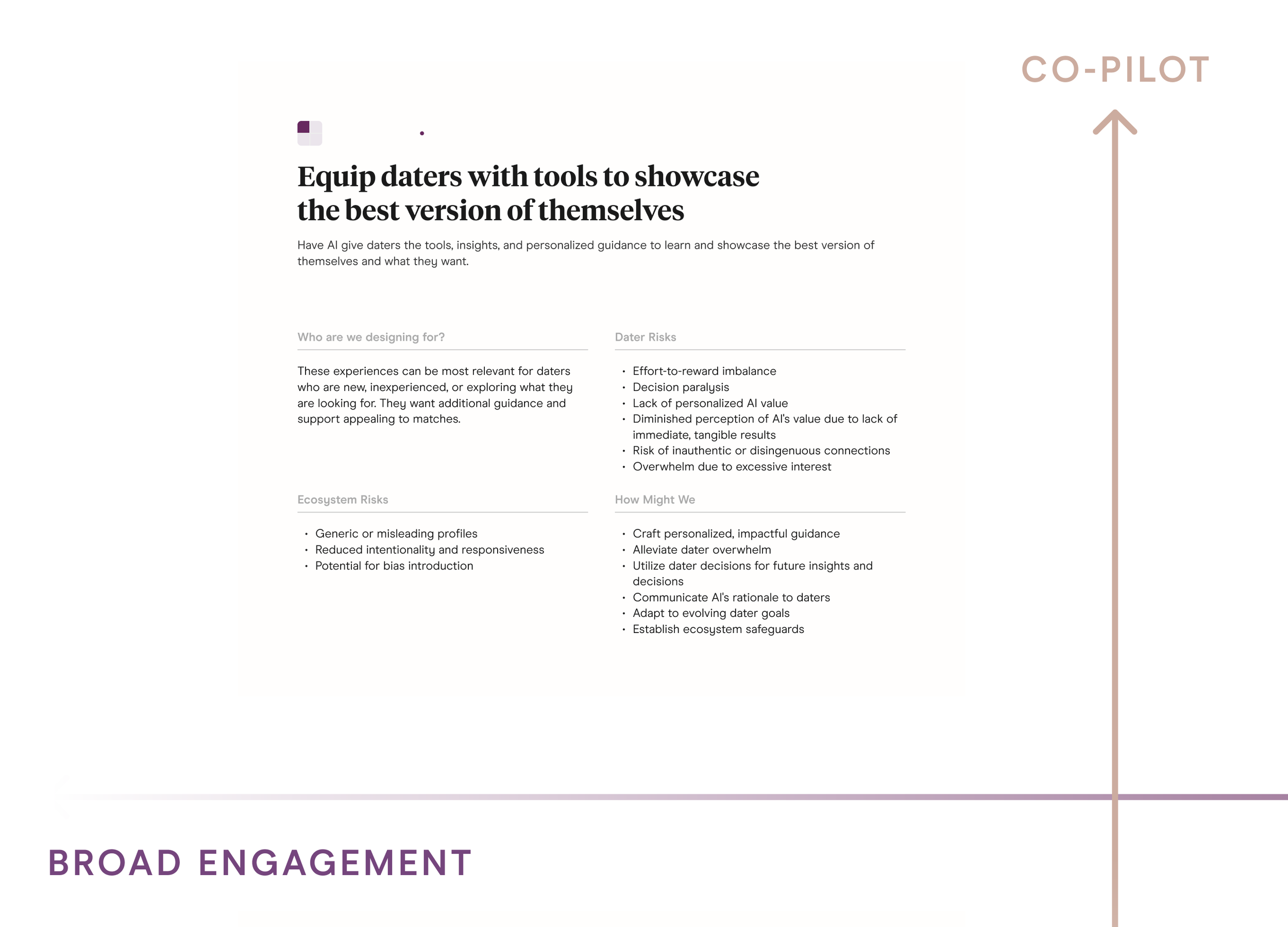

Explicit Risk Articulation

For each quadrant, I documented:

Dater risks (misrepresentation, loss of control, emotional harm)

Ecosystem risks (generic profiles, malicious behavior, bias amplification)

This reframed AI ethics from abstract values into design constraints.

Guidance Cards

To make this usable in practice, I created guidance cards teams could reference when exploring AI ideas.

Each card answered:

Who is this for?

What are the risks?

What safeguards are required?

What does responsible design look like here?

The Resolution

Without this intervention, AI would have remained theoretical.

This work closed the organization’s most fundamental AI question: could AI participate in a human relationship without eroding trust? After these concepts, spectrums, and guidance frameworks were shared, the nature of discussion inside Hinge changed. This work did not ship a feature. It did something more important: it made AI decidable inside the company.

This was the moment AI moved from abstraction to action at Hinge.

Before:

Teams debated whether AI belonged in dating

Concerns about manipulation, tone, and authenticity stalled progress

AI lived in theory, not product reality

After:

Teams aligned on clear boundaries for AI

Teams had a shared language to evaluate AI features

The question shifted from “Should we do this?” to “Where and how do we apply this responsibly?”