Hinge

Making Exploration Executable

The Unknown

If this effort failed, AI would remain theoretical, and design would lose credibility as the function capable of shaping it.

When this work began, AI was new to Hinge.

There had been no real AI exploration yet. What existed were early ideas, mocked flows, and conceptual discussions. There was no shared understanding of what it would actually mean to design, test, or evaluate AI inside a dating product.

Design leadership felt increasing pressure to articulate an end-to-end point of view on AI at Hinge. Not a feature vision, but a coherent stance: how AI could exist responsibly, credibly, and usefully in a category defined by trust and identity.

In response, leadership organized a multi-week sprint, new in structure and ambition for the company, to force the conversation out of abstraction and into reality.

The risk was not just technical or product-level. Design itself was on the line.

The Mission

Act as connective tissue between exploration, infrastructure, research, Hinge Labs, and eventual product execution.

I took ownership of continuity and execution.

Not a single concept. Not a single feature. Not a single team.

My responsibility was to ensure this exploration did not collapse into slides, insights, or disconnected artifacts, and that it produced something real enough to carry forward after the sprint ended.

That meant:

Working across both sprint teams simultaneously

Owning the end-to-end arc, not just design outputs

Making AI concrete enough to be evaluated under real constraints

Ensuring the work could survive beyond the sprint and not die at handoff

In practice, I acted as connective tissue between exploration, infrastructure, research, Labs, and eventual product execution.

The Launch

Turning Concepts into Live Systems

Instead of relying on mocked AI behavior, I built end-to-end live prototypes:

Created real OpenAI endpoints myself, hosted via Heroku

Integrated those endpoints into interactive prototypes

Designed flows that exposed latency, tone, failure modes, and trust breakdowns

Tested these experiences with real people, not hypothetical users

This immediately surfaced issues that static designs could not: when AI felt helpful versus invasive, when timing mattered more than content, and where trust broke down.
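The case study does not describe how the endpoints were implemented, so the following is only a minimal sketch of the idea, assuming a thin wrapper sits between the prototype and the hosted model. The names `answer_prompt`, `call_model`, `slow_after_ms`, and the fallback copy are all hypothetical. The sketch shows how a live prototype can record latency and failures on every call, which a mocked flow never surfaces.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PrototypeReply:
    text: str             # what the prototype shows the participant
    latency_ms: float     # how long the model call took
    slow: bool            # True when the call exceeded the latency threshold
    fallback_used: bool   # True when the model call failed
    error: Optional[str]  # failure reason, kept for the research notes


def answer_prompt(
    user_text: str,
    call_model: Callable[[str], str],  # hypothetical: wraps the hosted model call
    slow_after_ms: float = 3000.0,     # assumed threshold, not from the case study
    fallback_text: str = "Sorry, I can't help with that right now.",
) -> PrototypeReply:
    """Call the model once and record timing and failure details."""
    start = time.perf_counter()
    error = None
    try:
        text = call_model(user_text)
    except Exception as exc:
        # Show fallback copy so the session continues, but keep the reason.
        text = fallback_text
        error = f"{type(exc).__name__}: {exc}"
    latency_ms = (time.perf_counter() - start) * 1000.0

    return PrototypeReply(
        text=text,
        latency_ms=latency_ms,
        slow=latency_ms > slow_after_ms,
        fallback_used=error is not None,
        error=error,
    )
```

Passing the model call in as a function keeps the sketch independent of any particular SDK or host, and lets a researcher swap in a deliberately slow or failing stub to observe how participants react.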

Creating Infrastructure That Did Not Exist

To make real testing possible, I drove the creation of foundational infrastructure:

Built the first-ever internal Hinge prototyping API

Enabled prototypes to run on real user profile data under proper legal, privacy, and consent constraints

Established a repeatable pattern for safely testing AI with real data

Before this work, AI at Hinge could only be simulated. After this work, AI could be experienced.
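The prototyping API itself is not documented here. As an illustration only, the consent constraint described above could be enforced with a filter like the one below. `ProfileRecord`, `research_consent`, `ALLOWED_FIELDS`, and the field names are assumptions made for this sketch, not Hinge's actual schema.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Hypothetical allow-list: only these fields ever reach a prototype.
ALLOWED_FIELDS = ("first_name", "prompts")


@dataclass
class ProfileRecord:
    user_id: str
    research_consent: bool      # assumed flag: user agreed to research use
    fields: Dict[str, object]   # raw profile data


def profiles_for_prototype(records: Iterable[ProfileRecord]) -> List[Dict[str, object]]:
    """Return only consented profiles, reduced to the allow-listed fields."""
    safe = []
    for record in records:
        if not record.research_consent:
            continue  # never expose data without consent
        safe.append(
            {key: record.fields[key] for key in ALLOWED_FIELDS if key in record.fields}
        )
    return safe
```

An allow-list is used rather than a block-list so that any field added to a profile later stays hidden from prototypes until someone explicitly approves it.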

Changing How Research and Decisions Happened

This infrastructure enabled something Hinge had never done before:

The first in-person research sessions using high-fidelity, live AI systems

Real users reacting, in real time, to AI behavior

Teams observing where excitement, discomfort, trust, and confusion actually emerged

One participant reaction was so viscerally positive that the clip was shared internally and is still referenced in company meetings.

For the first time, leadership saw people experience AI instead of talking about it.

Ensuring the Work Didn’t Die

After the sprint, I took full ownership of carrying the output forward into Hinge Labs so it could evolve rather than stall.

I transitioned the work into Labs without losing momentum

I shifted Labs from pure research outputs to shippable product experiences

I established Labs I.D.E.A. (Insights & Design Execution Assets) to make it easier for product teams to pick up and ship exploratory work

Labs became an execution engine, not just an insight generator.

Institutionalizing Conviction

To scale belief beyond the immediate teams, I designed and ran:

The first company-wide Labs “Science Fair”

A hands-on dogfooding session where multiple AI experiences were live

An environment where teams and executives compared tradeoffs by using products, not debating slides

This became a lasting tradition at Hinge and is still used before launching AI features.

The Resolution

This work permanently changed how AI progressed at Hinge.

Tangible Outcomes

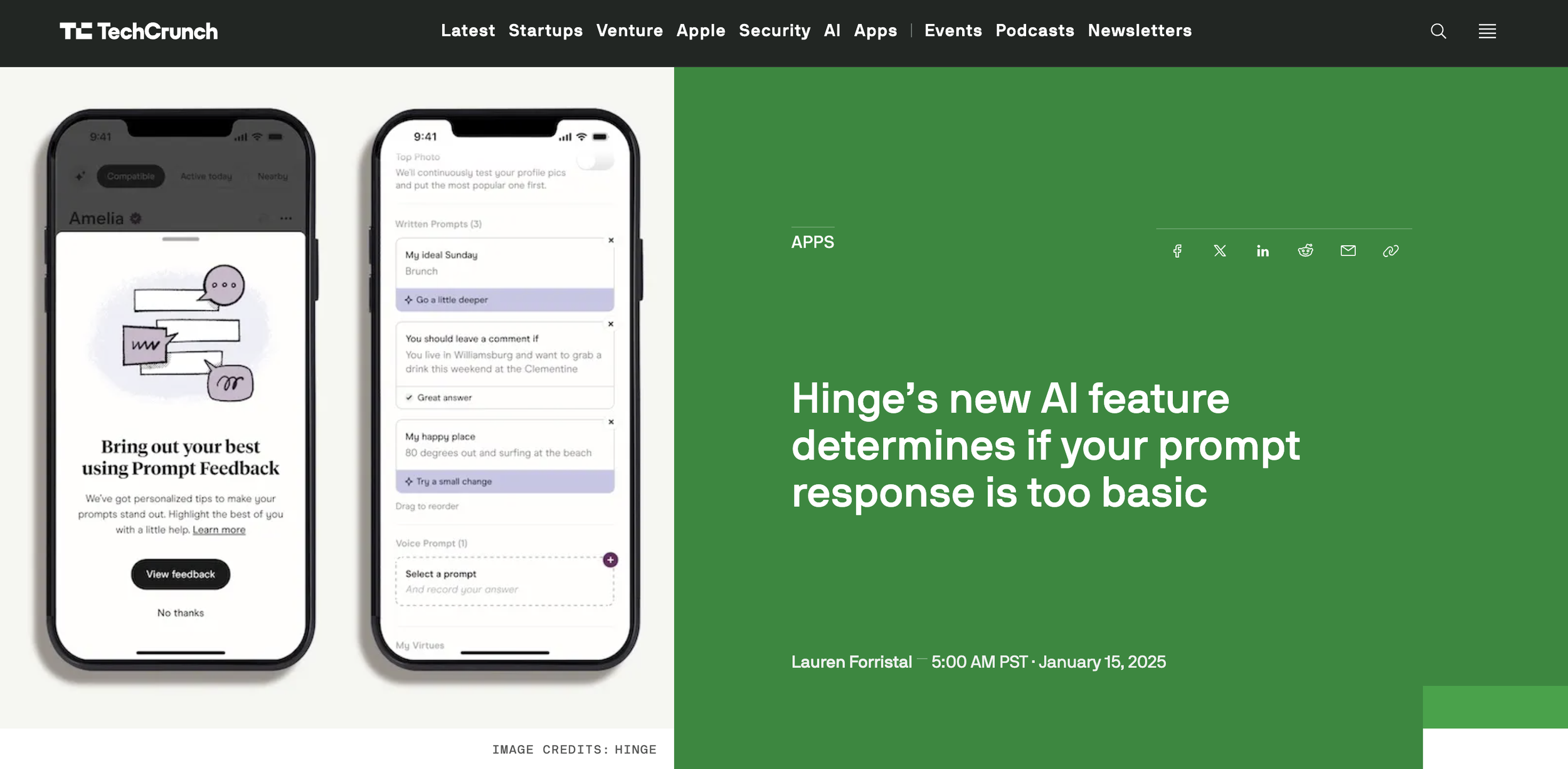

Enabled and shipped Prompt Feedback, Hinge’s first AI-powered feature

Enabled and accelerated the “Warm Intros” feature

Established a clear path from AI exploration to production

This work answered a critical internal question: can high-risk AI exploration operate under real constraints and still ship? The answer became yes. This was the moment AI exploration at Hinge became institutionalized, not just inspirational.

Without this intervention:

AI exploration would have stalled

Prompt Feedback would not have shipped

Warm Intros would not have accelerated

Science Fairs would not exist

Labs would not have evolved into an execution engine

Organizational Shift

AI exploration was expected to operate under real constraints

Labs was measured by execution, not curiosity

Product teams benchmarked their velocity against Labs

AI work could no longer hide behind theory